A new analysis tied to startup Oumi has raised fresh questions about Google’s AI Overviews. The study found that the feature answered about 90% of test prompts correctly. That still leaves 1 in 10 answers wrong. At Google’s search scale, that error rate could translate into tens of millions of inaccurate answers every hour.

AI Overviews sit at the top of Google Search and present answers in a confident, summary-style format, so even a small error rate carries extra weight. Assuming roughly 5 trillion queries a year pass through AI Overviews, a 10% error rate works out to about 57 million incorrect answers per hour.

If those figures hold, the AI-powered system could be surfacing tens of millions of inaccurate answers every hour across Google’s traffic.
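The per-hour figure above is simple arithmetic, and a short Python sketch makes the assumptions explicit. The function name `wrong_answers_per_hour` is only illustrative, and the calculation assumes that every one of the roughly 5 trillion annual queries shows an AI Overview and that benchmark accuracy carries over to real searches, neither of which the study establishes.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def wrong_answers_per_hour(annual_queries: float, accuracy: float) -> float:
    """Estimate incorrect answers per hour from yearly volume and accuracy.

    Assumes every query receives an answer and that errors are spread
    evenly across the year (a simplification for illustration only).
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return annual_queries * (1.0 - accuracy) / HOURS_PER_YEAR


if __name__ == "__main__":
    # 5 trillion queries per year is the figure cited in the article.
    for accuracy in (0.90, 0.91):
        per_hour = wrong_answers_per_hour(5e12, accuracy)
        print(f"{accuracy:.0%} accuracy -> about {per_hour / 1e6:.1f} million wrong answers per hour")
    # prints:
    # 90% accuracy -> about 57.1 million wrong answers per hour
    # 91% accuracy -> about 51.4 million wrong answers per hour
```

Under the same assumptions, moving from 90% to 91% accuracy trims the estimate by only about 6 million answers per hour, which is why a one-point improvement still leaves a large absolute number of errors.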

Oumi’s Analysis of Google AI Overviews Answers:

Oumi reportedly began running the test last year, when Gemini 2.5 was Google’s strongest model in the system. The analysis used SimpleQA, a factuality benchmark comprising more than 4,000 questions with verifiable answers.

After the Gemini 3 update, AI Overviews scored 91% on the benchmark, which is better than before but still not perfect. The report also gave examples where AI Overviews confidently picked the wrong answer from conflicting sources.

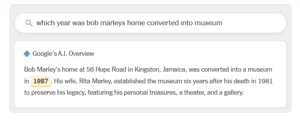

According to The Times, one of the flawed answers concerned the conversion of Bob Marley’s home into a museum. AI Overviews stated that this took place in 1987, whereas it actually happened on May 11, 1986, the fifth anniversary of Mr. Marley’s death.

Source: The New York Times

How Did Google React?

Google disputed the study’s conclusions and said the findings are flawed. According to Ned Adriance, Google’s spokesperson, SimpleQA contains incorrect information and does not reflect what people actually search for on Google.

Speaking with The Times, Adriance said, “This study has serious holes. It doesn’t reflect what people are actually searching on Google.”

The tech giant added that it uses different benchmarks, including SimpleQA Verified, which relies on a smaller set of more carefully checked questions. Google’s point is that the test may not represent real-world use, even if the error rate still looks concerning.

The findings have raised serious concerns about the accuracy of AI Overviews and about how far users should trust the answers they see. They are a reminder that AI can make mistakes, so users should double-check responses.
